AI is here.

And it’s not just another tool; it’s a fundamental platform shift. Think of it like the dawn of the internet or the arrival of electricity. Everything changes. But what happens when the bedrock of this new digital world – our data – is riddled with cracks? That’s precisely the problem AdTech Beat is diving into today, inspired by the discussions at the recent MarTech Conference.

The gamble of weak data

Imagine trying to build a skyscraper on quicksand. That’s the reality for many marketing teams today. Their bold campaigns, their cutting-edge AI initiatives, their entire digital strategy – it all feels like a high-stakes gamble because the underlying data is messy, siloed, and untrustworthy. It’s the constant, behind-the-scenes struggle of syncing systems and reconciling spreadsheets that look like abstract art, all under pressure to achieve perfection in a world designed for imperfection. It’s exhausting, and frankly, it’s holding back innovation.

Why does this matter for AI adoption?

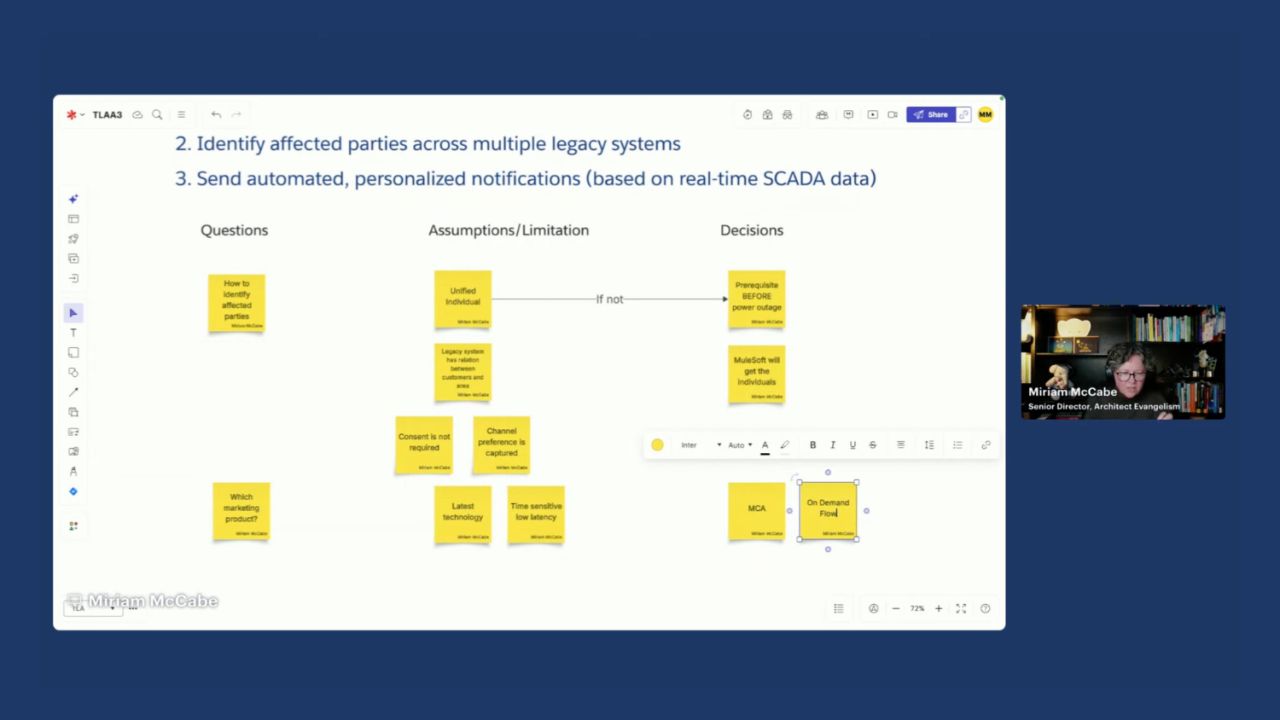

This is where the concept of a “confidence layer” comes into play, a crucial theme from the MarTech Conference session, “The confidence layer: Building data foundations marketers can trust.” It’s about more than just collecting data; it’s about architecting it with intention, building a system so strong and reliable that every marketer, from the analyst deep in the weeds to the executive suite, can trust it implicitly. When your data is clean, accessible, and unified – when it’s got that “confidence layer” – that’s when AI and automation don’t just support marketing; they empower it. They become engines of growth, not sources of frustration.

Bridging the technical and the creative

The panel at the MarTech Conference, featuring luminaries like Cyndi Greenglass, Ben Vigneron, Stephen Williams, and Josh Wilson, is aiming to bridge the chasm between the technical and the creative. They’re not just talking about technical debt; they’re talking about transforming it into a pathway for faster, smarter risk-taking. This isn’t just about IT fixing things; it’s about empowering the entire marketing engine. When you have shared visibility and your data is a single source of truth, your marketing, sales, and leadership teams are all rowing in the same direction. It’s about making data a strategic asset, not a perpetual bottleneck.

When marketing potential feels limited by back-end friction, the solution is a stronger foundation.

This is the kind of thinking that separates the innovators from the imitators. It’s about understanding that the future of marketing isn’t just about creative ideas; it’s about the sophisticated infrastructure that brings those ideas to life at scale. And that infrastructure, at its heart, is built on trust – trust in the data that fuels every decision.

My Unique Insight: The AI Confidence Layer as the New Operating System

We’ve seen platform shifts before. The shift to mobile. The shift to cloud. This AI shift feels different, more foundational. And while everyone’s rightly focused on the dazzling new models and generative capabilities, the real unsung hero – the true bedrock of this AI era – is the data confidence layer. Think of it as the operating system for AI-driven marketing. Without a stable, trustworthy OS, no matter how powerful the applications (the AI models), the whole system crashes or, at best, performs erratically. This isn’t just about cleaner spreadsheets; it’s about building the fundamental trust that will allow AI to move from a fascinating experiment to the engine of everyday business.

Frequently Asked Questions

What is a data confidence layer?

A data confidence layer is the set of processes, technologies, and governance put in place to ensure marketing data is accurate, accessible, unified, and reliable, so that teams can trust it for use in campaigns and AI.

Will a data confidence layer help with AI adoption?

Absolutely. Trustworthy data is the fuel for effective AI and automation. Without it, AI models can produce inaccurate or misleading results, hindering adoption and impact.